I'm feeling like writing a very long explanation of a very small change again. Some folks have told me they enjoy my attempts to detail the entire step-by-step process of debugging a somewhat complex problem, so sit back, folks, and enjoy...The Tale Of The Two-Day, One-Character Patch!

Recently we landed Python 3.6 in Fedora Rawhide. A Python version bump like that requires all Python-dependent packages in the distribution to be rebuilt. As usually happens, several packages failed to rebuild successfully, so among other work, I've been helping work through the list of failed packages and fixing them up.

Two days ago, I reached python-deap. As usual, I first simply tried a mock build of the package: sometimes it turns out we already fixed whatever had previously caused the build to fail, and simply retrying will make it work. But that wasn't the case this time.

The build failed due to build dependencies not being installable - python2-pypandoc, in this case. It turned out that this depends on pandoc-citeproc, and that wasn't installable because a new ghc build had been done without rebuilds of the set of pandoc-related packages that must be rebuilt after a ghc bump. So I rebuilt pandoc, and ghc-aeson-pretty (an updated version was needed to build an updated pandoc-citeproc which had been committed but not built), and finally pandoc-citeproc.

With that done, I could do a successful scratch build of python-deap. I tweaked the package a bit to enable the test suites - another thing I'm doing for each package I'm fixing the build of, if possible - and fired off an official build.

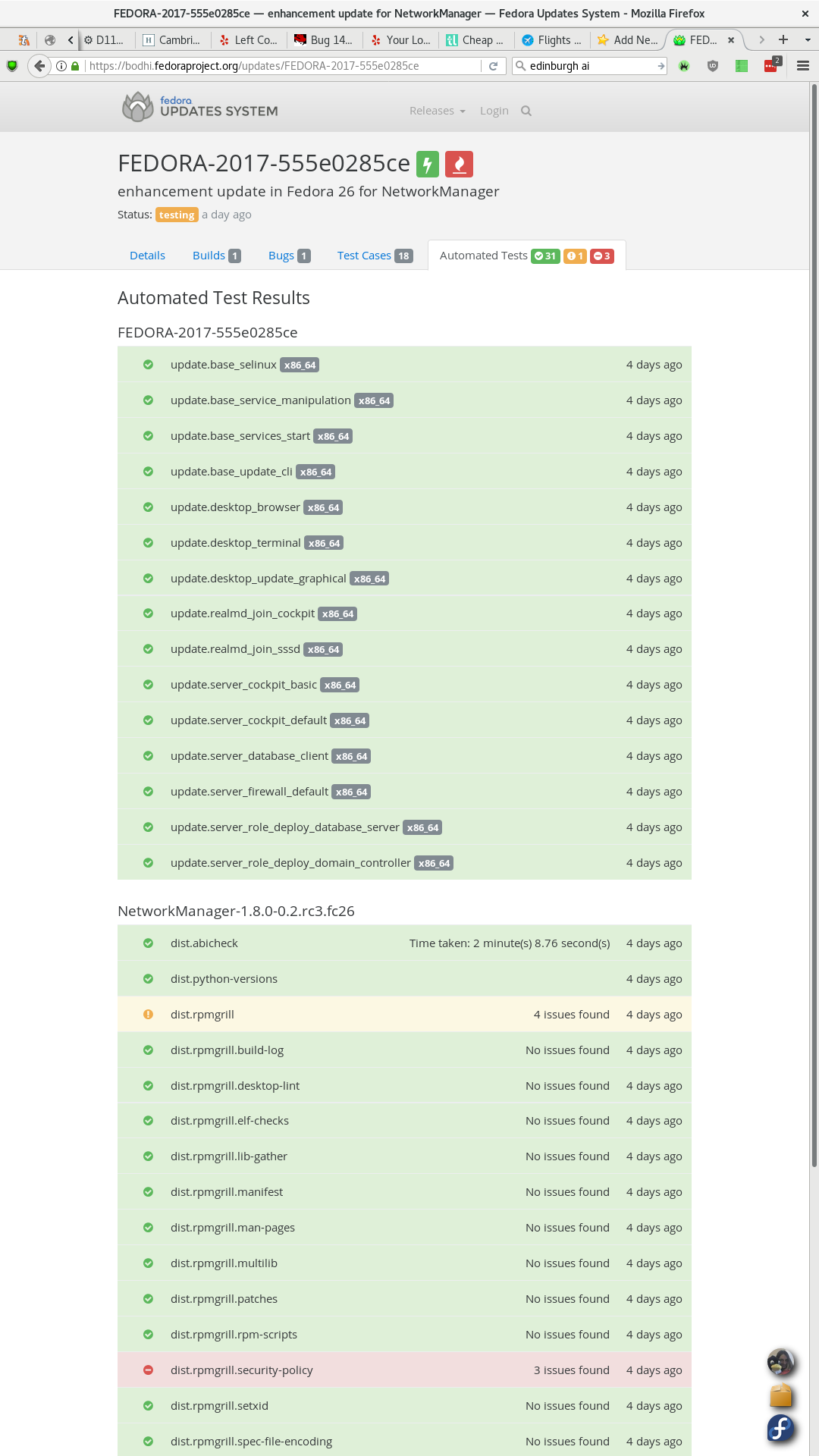

Now you may notice that this looks a bit odd, because all the builds for the different arches succeeded (they're green), but the overall 'State' is "failed". What's going on there? Well, if you click "Show result", you'll see this:

    BuildError: The following noarch package built differently on different architectures: python-deap-doc-1.0.1-2.20160624git232ed17.fc26.noarch.rpm
    rpmdiff output was:
    error: cannot open Packages index using db5 - Permission denied (13)
    error: cannot open Packages database in /var/lib/rpm
    error: cannot open Packages database in /var/lib/rpm
    removed     /usr/share/doc/python-deap/html/_images/cma_plotting_01_00.png
    removed     /usr/share/doc/python-deap/html/examples/es/cma_plotting_01_00.hires.png
    removed     /usr/share/doc/python-deap/html/examples/es/cma_plotting_01_00.pdf
    removed     /usr/share/doc/python-deap/html/examples/es/cma_plotting_01_00.png

So, this is a good example of where background knowledge is valuable. Getting from step to step in this kind of debugging/troubleshooting process is a sort of combination of logic, knowledge and perseverance. Always try to be logical and methodical. When you start out you won't have an awful lot of knowledge, so you'll need a lot of perseverance; hopefully, the longer you go on, the more knowledge you'll pick up, and thus the less perseverance you'll need!

In this case the error is actually fairly helpful, and it also helps that I know a bit about packaging. Fedora allows arched packages with noarch subpackages, and this is how python-deap is set up: the main packages are arched, but there is a python-deap-doc subpackage that is noarch. That's the package we're concerned with here. I also recalled a recent mailing list discussion of this "built differently on different architectures" error.

As discussed in that thread, we're failing a Koji check specific to this kind of package. If all the per-arch builds succeed individually, Koji will take the noarch subpackage(s) from each arch and compare them; if they're not all the same, Koji will consider this an error and fail the build. After all, the point of a noarch package is that its contents are the same for all arches and so it shouldn't matter which arch build we take the noarch subpackage from. If it comes out different on different arches, something is clearly up.
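To make the idea concrete, here's a rough sketch of that kind of comparison. It is not how Koji does it (the real check goes through rpmdiff and compares more than file names); the function name, the input format, the index.html path and the choice of arches are all made up for illustration. It just takes the file list of the noarch subpackage from each arch, treats the most common list as the reference, and reports any arch that disagrees.

```python
from collections import Counter


def find_odd_arches(files_by_arch):
    """Return {arch: (missing, extra)} for arches whose noarch file list
    differs from the most common one.

    files_by_arch maps an arch name to the set of file paths found in the
    noarch subpackage built on that arch (a hypothetical input format).
    """
    # Treat the file list that most arches agree on as the reference.
    counts = Counter(frozenset(files) for files in files_by_arch.values())
    reference = counts.most_common(1)[0][0]

    odd = {}
    for arch, files in files_by_arch.items():
        files = frozenset(files)
        if files != reference:
            odd[arch] = (sorted(reference - files), sorted(files - reference))
    return odd


# Illustrative sample data: only the plot path comes from the rpmdiff output.
common = {"/usr/share/doc/python-deap/html/index.html"}
plot = "/usr/share/doc/python-deap/html/_images/cma_plotting_01_00.png"
builds = {
    "x86_64": common | {plot},
    "aarch64": common | {plot},
    "ppc64": common,  # the plot is missing from this build
}
print(find_odd_arches(builds))
```

Something like this would also have answered the "which arch was different?" question directly, which the Koji message doesn't.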

So this left me with the problem of figuring out which arch was different (it'd be nice if the Koji message actually told us...) and why. I started out just looking at the build logs for each arch and searching for 'cma_plotting'. This is actually another important thing: one of the most important approaches to have in your toolbox for this kind of work is just 'searching for significant-looking text strings'. That might be a grep or it might be a web search, but you'll probably wind up doing a lot of both. Remember good searching technique: try to find the most 'unusual' strings you can to search for, ones for which the results will be strongly correlated with your problem. This quickly told me that the problematic arch was ppc64. The 'removed' files were not present in that build, but they were present in the builds for all other arches.

So I started looking more deeply into the ppc64 build log. If you search for 'cma_plotting' in that file, you'll see the very first result is "WARNING: Exception occurred in plotting cma_plotting". That sounds bad! Below it is a long Python traceback - the text starting "Traceback (most recent call last):".

So what we have here is some kind of Python thing crashing during the build. If we quickly compare with the build logs on other arches, we don't see the same thing at all - there is no traceback in those build logs. Especially since this shows up right when the build process should be generating the files we know are the problem (the cma_plotting files, remember), we can be pretty sure this is our culprit.

Now this is a pretty big scary traceback, but we can learn some things from it quite easily. One is very important: we can see what it is that's going wrong. If we look at the end of the traceback, we see that all the last calls involve files in /usr/lib64/python2.7/site-packages/matplotlib. This means we're dealing with a Python module called matplotlib. We can associate that with the package python-matplotlib without much trouble, and now we have our next suspect.

If we look a bit before the traceback, we can get a bit more general context of what's going on, though it turns out not to be very important in this case. Sometimes it is, though. In this case we can see this:

    + sphinx-build-2 doc build/html
    Running Sphinx v1.5.1

Again, background knowledge comes in handy here: I happen to know that Sphinx is a tool for generating documentation. But if you didn't already know that, you should quite easily be able to find it out, by good old web search. So what's going on is the package build process is trying to generate python-deap's documentation, and that process uses this matplotlib library, and something is going very wrong - but only on ppc64, remember - in matplotlib when we try to generate one particular set of doc files.

So next I start trying to figure out what's actually going wrong in matplotlib. As I mentioned, the traceback is pretty long. This is partly just because matplotlib is big and complex, but it's more because it's a fairly rare type of Python error - an infinite recursion. You'll see the traceback ends with many, many repetitions of this line:

      File "/usr/lib64/python2.7/site-packages/matplotlib/mathtext.py", line 861, in _get_glyph
        return self._get_glyph('rm', font_class, sym, fontsize)

followed by:

      File "/usr/lib64/python2.7/site-packages/matplotlib/mathtext.py", line 816, in _get_glyph
        uniindex = get_unicode_index(sym, math)
      File "/usr/lib64/python2.7/site-packages/matplotlib/mathtext.py", line 87, in get_unicode_index
        if symbol == '-':
    RuntimeError: maximum recursion depth exceeded in cmp

What 'recursion' means is pretty simple: it just means that a function can call itself. A common example of where you might want to do this is if you're trying to walk a directory tree. In Python-y pseudo-code it might look a bit like this:

    def read_directory(directory):
        print(directory.name)
        for entry in directory:
            if entry is file:
                print(entry.name)
            if entry is directory:
                read_directory(entry)

To deal with directories nested in other directories, the function just calls itself. The danger is if you somehow mess up when writing code like this, and it winds up in a loop, calling itself over and over and never escaping: this is 'infinite recursion'. Python, being a nice language, notices when this is going on, and bails after a certain number of recursions, which is what's happening here.
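If you want to see Python bail out for yourself, here's a tiny runnable sketch (the function names are mine, nothing to do with matplotlib). One function recurses sensibly towards a stopping point; the other has no stopping point at all. Note that on current Python 3 the error is a RecursionError, which is a subclass of the RuntimeError we see in the Python 2.7 traceback above.

```python
import sys


def count_down(n):
    """Well-behaved recursion: each call moves closer to the base case."""
    if n == 0:
        return "done"
    return count_down(n - 1)


def never_stops(n):
    """Broken recursion: there is no base case, so it calls itself forever."""
    return never_stops(n + 1)


print(count_down(10))           # finishes normally
print(sys.getrecursionlimit())  # the depth at which Python gives up

try:
    never_stops(0)
except RecursionError as err:   # a subclass of RuntimeError on Python 3.5+
    print("Python bailed out:", err)
```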

So now we know where to look in matplotlib, and what to look for. Let's go take a look! matplotlib, like most everything else in the universe these days, is on GitHub, which is bad for ecosystem health but handy just for finding stuff. Let's go look at the function from the traceback.

Well, this is pretty long, and maybe a bit intimidating. But an interesting thing is, we don't really need to know what this function is for - I actually still don't know precisely (according to the name it should be returning a 'glyph' - a single visual representation for a specific character from a font - but it actually returns a font, the unicode index for the glyph, the name of the glyph, the font size, and whether the glyph is italicized, for some reason). What we need to concentrate on is the question of why this function is getting in a recursion loop on one arch (ppc64) but not any others.

First let's figure out how the recursion is actually triggered - that's vital to figuring out what the next step in our chain is. The line that triggers the loop is this one:

return self._get_glyph('rm', font_class, sym, fontsize)

That's where it calls itself. It's kinda obvious that the authors expect that call to succeed - it shouldn't run down the same logical path, but instead get to the 'success' path (the return font, uniindex, symbol_name, fontsize, slanted line at the end of the function) and thus break the loop. But on ppc64, for some reason, it doesn't.

So what's the logic path that leads us to that call, both initially and when it recurses? Well, it's down three levels of conditionals:

    if not found_symbol:
        if self.cm_fallback:
            <other path>
        else:
            if fontname in ('it', 'regular') and isinstance(self, StixFonts):
                return self._get_glyph('rm', font_class, sym, fontsize)

So we only get to this path if found_symbol is not set by the time we reach that first if, and self.cm_fallback is not set, and the fontname given when the function was called was 'it' or 'regular', and the object this function (actually method) belongs to is an instance of the StixFonts class (or a subclass). Don't worry if we're getting a bit too technical at this point, because I did spend a bit of time looking into those last two conditions, but ultimately they turned out not to be that significant. The important one is the first one: if not found_symbol.

By this point, I'm starting to wonder if the problem is that we're failing to 'find' the symbol - in the first half of the function - when we shouldn't be. Now there are a couple of handy logical shortcuts we can take here that turned out to be rather useful. First we look at the whole logic flow of the found_symbol variable and see that it's a bit convoluted. From the start of the function, there are two different ways it can be set True - the if self.use_cmex block and then the 'fallback' if not found_symbol block after that. Then there's another block that starts if found_symbol: where it gets set back to False again, and another lookup is done:

    if found_symbol:
        (...)
        found_symbol = False
        font = self._get_font(new_fontname)
        if font is not None:
            glyphindex = font.get_char_index(uniindex)
            if glyphindex != 0:
                found_symbol = True

At first, though, we don't know if we're even hitting that block, or if we're failing to 'find' the symbol earlier on. It turns out, though, that it's easy to tell - because of this earlier block:

    if not found_symbol:
        try:
            uniindex = get_unicode_index(sym, math)
            found_symbol = True
        except ValueError:
            uniindex = ord('?')
            warn("No TeX to unicode mapping for '%s'" %
                 sym.encode('ascii', 'backslashreplace'),
                 MathTextWarning)

Basically, if we don't find the symbol there, the code logs a warning. We can see from our build log that we don't see any such warning, so we know that the code does initially succeed in finding the symbol - that is, when we get to the if found_symbol: block, found_symbol is True. That logically means that it's that block where the problem occurs - we have found_symbol going in, but where that block sets it back to False then looks it up again (after doing some kind of font substitution, I don't know why, don't care), it fails.

The other thing I noticed while poking through this code is a later warning. Remember that the infinite recursion only happens if fontname in ('it', 'regular') and isinstance(self, StixFonts)? Well, what happens if that's not the case is interesting:

    if fontname in ('it', 'regular') and isinstance(self, StixFonts):
        return self._get_glyph('rm', font_class, sym, fontsize)
    warn("Font '%s' does not have a glyph for '%s' [U+%x]" %
         (new_fontname,
          sym.encode('ascii', 'backslashreplace').decode('ascii'),
          uniindex),
         MathTextWarning)

that is, if that condition isn't satisfied, instead of calling itself, the next thing the function does is log a warning. So it occurred to me to go and see if there are any of those warnings in the build logs. And, whaddayaknow, there are four such warnings in the ppc64 build log:

    /usr/lib64/python2.7/site-packages/matplotlib/mathtext.py:866: MathTextWarning: Font 'rm' does not have a glyph for '1' [U+1d7e3]
      MathTextWarning)
    /usr/lib64/python2.7/site-packages/matplotlib/mathtext.py:867: MathTextWarning: Substituting with a dummy symbol.
      warn("Substituting with a dummy symbol.", MathTextWarning)
    /usr/lib64/python2.7/site-packages/matplotlib/mathtext.py:866: MathTextWarning: Font 'rm' does not have a glyph for '0' [U+1d7e2]
      MathTextWarning)
    /usr/lib64/python2.7/site-packages/matplotlib/mathtext.py:866: MathTextWarning: Font 'rm' does not have a glyph for '-' [U+2212]
      MathTextWarning)
    /usr/lib64/python2.7/site-packages/matplotlib/mathtext.py:866: MathTextWarning: Font 'rm' does not have a glyph for '2' [U+1d7e4]
      MathTextWarning)

but there are no such warnings in the logs for other arches. That's really rather interesting. It makes one possibility very unlikely: that we do reach the recursed call on all arches, but it fails on ppc64 and succeeds on the other arches. It's looking far more likely that the "re-discovery" bit of the function - the if found_symbol: block where it looks up the symbol again - usually works on other arches, but fails on ppc64.
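I did this comparison by eye, but if you have a lot of logs to get through, it's the kind of thing that's easy to automate. Here's a small sketch that pulls the missing-glyph warnings out of a chunk of log text with a regular expression. The helper name and the pattern are my own; the sample lines are the four warnings from the ppc64 log above.

```python
import re

# The four missing-glyph warnings from the ppc64 build log quoted above.
LOG = """\
/usr/lib64/python2.7/site-packages/matplotlib/mathtext.py:866: MathTextWarning: Font 'rm' does not have a glyph for '1' [U+1d7e3]
/usr/lib64/python2.7/site-packages/matplotlib/mathtext.py:866: MathTextWarning: Font 'rm' does not have a glyph for '0' [U+1d7e2]
/usr/lib64/python2.7/site-packages/matplotlib/mathtext.py:866: MathTextWarning: Font 'rm' does not have a glyph for '-' [U+2212]
/usr/lib64/python2.7/site-packages/matplotlib/mathtext.py:866: MathTextWarning: Font 'rm' does not have a glyph for '2' [U+1d7e4]
"""

MISSING_GLYPH = re.compile(
    r"Font '(?P<font>[^']+)' does not have a glyph for '(?P<sym>[^']+)' "
    r"\[U\+(?P<code>[0-9a-fA-F]+)\]"
)


def missing_glyphs(log_text):
    """Return (font, symbol, code point) for each missing-glyph warning."""
    return [
        (m.group("font"), m.group("sym"), int(m.group("code"), 16))
        for m in MISSING_GLYPH.finditer(log_text)
    ]


for font, sym, code in missing_glyphs(LOG):
    print(font, repr(sym), hex(code))
```

Run the same function over the log for each arch and any arch with a non-empty result is worth a closer look.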

So just by looking at the logical flow of the function, particularly what happens in different conditional branches, we've actually been able to figure out quite a lot, without knowing or even caring what the function is really for. By this point, I was really focusing in on that if found_symbol: block. And that leads us to our next suspect. The most important bit in that block is where it actually decides whether to set found_symbol to True or not, here:

    font = self._get_font(new_fontname)
    if font is not None:
        glyphindex = font.get_char_index(uniindex)
        if glyphindex != 0:
            found_symbol = True

I didn't actually know whether it was failing because self._get_font didn't find anything, or because font.get_char_index returned 0. I think I just played a hunch that get_char_index was the problem, but it wouldn't be too difficult to find out by just editing the code a bit to log a message telling you whether or not font was None, and re-running the test suite.

Anyhow, I wound up looking at get_char_index, so we need to go find that. You could work backwards through the code and figure out what font is an instance of so you can find it, but that's boring: it's far quicker just to grep the damn code. If you do that, you get various results that are calls of it, then this:

    src/ft2font_wrapper.cpp:const char *PyFT2Font_get_char_index__doc__ =
    src/ft2font_wrapper.cpp: "get_char_index()\n"
    src/ft2font_wrapper.cpp:static PyObject *PyFT2Font_get_char_index(PyFT2Font *self, PyObject *args, PyObject *kwds)
    src/ft2font_wrapper.cpp: if (!PyArg_ParseTuple(args, "I:get_char_index", &ccode)) {
    src/ft2font_wrapper.cpp: {"get_char_index", (PyCFunction)PyFT2Font_get_char_index, METH_VARARGS, PyFT2Font_get_char_index__doc__},

Which is the point at which I started mentally buckling myself in, because now we're out of Python and into C++. Glorious C++! I should note here that, while I'm probably a half-decent Python coder by now, I am still pretty awful at C(++). I may be somewhat or very wrong in anything I say about it. Corrections welcome.

So I buckled myself in and went for a look at this ft2font_wrapper.cpp thing. I've seen this kind of thing a couple of times before, so by squinting at it a bit sideways, I could more or less see that this is what Python calls an extension module: basically, it's a Python module written in C or C++. This gets done if you need to create a new built-in type, or for speed, or - as in this case - because the Python project wants to work directly with a system shared library (in this case, freetype), either because it doesn't have Python bindings or because the project doesn't want to use them for some reason.

This code pretty much provides a few classes for working with Freetype fonts. It defines a class called matplotlib.ft2font.FT2Font with a method get_char_index, and that's what the code back up in mathtext.py is dealing with: that font we were dealing with is an FT2Font instance, and we're using its get_char_index method to try and 'find' our 'symbol'.

Fortunately, this get_char_index method is actually simple enough that even I can figure out what it's doing:

    static PyObject *PyFT2Font_get_char_index(PyFT2Font *self, PyObject *args, PyObject *kwds)
    {
        FT_UInt index;
        FT_ULong ccode;
        if (!PyArg_ParseTuple(args, "I:get_char_index", &ccode)) {
            return NULL;
        }
        index = FT_Get_Char_Index(self->x->get_face(), ccode);
        return PyLong_FromLong(index);
    }

(If you're playing along at home for MEGA BONUS POINTS, you now have all the necessary information and you can try to figure out what the bug is. If you just want me to explain it, keep reading!)

There's really not an awful lot there. It's calling FT_Get_Char_Index with a couple of args and returning the result. Not rocket science.

In fact, this seemed like a good point to start just doing a bit of experimenting to identify the precise problem, because we've reduced the problem to a very small area. So this is where I stopped just reading the code and started hacking it up to see what it did.

First I tweaked the relevant block in mathtext.py to just log the values it was feeding in and getting out:

    font = self._get_font(new_fontname)
    if font is not None:
        glyphindex = font.get_char_index(uniindex)
        warn("uniindex: %s, glyphindex: %s" % (uniindex, glyphindex))
        if glyphindex != 0:
            found_symbol = True

Sidenote: how exactly to just print something out to the console when you're building or running tests can vary quite a bit depending on the codebase in question. What I usually do is just look at how the project already does it - find some message that is being printed when you build or run the tests, and then copy that. Thus in this case we can see that the code is using this warn function (it's actually warnings.warn), and we know those messages are appearing in our build logs, so...let's just copy that.
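One thing to watch out for if you borrow warnings.warn for debug output: by default Python only shows a given warning once per code location, so repeated calls with the same message can silently vanish. Here's a minimal standalone sketch of the logging trick, with the filter set to "always" so nothing gets swallowed. The DebugWarning class and the lookup function are stand-ins I made up; lookup just pretends to be the get_char_index call and always "fails".

```python
import warnings


class DebugWarning(UserWarning):
    """Hypothetical category, just to make our debug output easy to spot."""


def lookup(uniindex):
    # Stand-in for font.get_char_index(); always "fails" so we get a message.
    glyphindex = 0
    warnings.warn("uniindex: %s, glyphindex: %s" % (uniindex, glyphindex),
                  DebugWarning)
    return glyphindex


with warnings.catch_warnings(record=True) as caught:
    warnings.simplefilter("always")  # don't suppress repeated warnings
    lookup(0x2212)
    lookup(0x2212)

for w in caught:
    print(w.category.__name__, w.message)
```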

Then I ran the test suite on both x86_64 and ppc64, and compared. This told me that the Python code was passing the same uniindex values to the C code on both x86_64 and ppc64, but getting different results back - that is, I got the same recorded uniindex values, but while on x86_64 the resulting glyphindex value was always something larger than 0, on ppc64 it was sometimes 0.

The next step should be pretty obvious: log the input and output values in the C code.

    index = FT_Get_Char_Index(self->x->get_face(), ccode);
    printf("ccode: %lu index: %u\n", ccode, index);

Another sidenote: one of the more annoying things with this particular issue was just being able to run the tests with modifications and see what happened. First, I needed an actual ppc64 environment to use. The awesome Patrick Uiterwijk of Fedora release engineering provided me with one. Then I built a .src.rpm of the python-matplotlib package, ran a mock build of it, and shelled into the mock environment. That gives you an environment with all the necessary build dependencies and the source and the tests all there and prepared already. Then I just copied the necessary build, install and test commands from the spec file. For a simple pure-Python module this is all usually pretty easy and you can just check the source out and do it right in your regular environment or in a virtualenv or something, but for something like matplotlib which has this C++ extension module too, it's more complex. The spec builds the code, then installs it, then runs the tests out of the source directory with PYTHONPATH=BUILDROOT/usr/lib64/python2.7/site-packages, so the code that was actually built and installed is used for the tests. When I wanted to modify the C part of matplotlib, I edited it in the source directory, then re-ran the 'build' and 'install' steps, then ran the tests; if I wanted to modify the Python part I just edited it directly in the BUILDROOT location and re-ran the tests.

When I ran the tests on ppc64, I noticed that several hundred of them failed with exactly the bug we'd seen in the python-deap package build - this infinite recursion problem. Several others failed due to not being able to find the glyph, without hitting the recursion. It turned out the package maintainer had disabled the tests on ppc64, and so Fedora 24+'s python-matplotlib had been broken on ppc64 since about April.

So anyway, with that modified C code built and used to run the test suite, I finally had a smoking gun. Running this on x86_64 and ppc64, the logged ccode values were totally different. The values logged on ppc64 were huge. But as we know from the previous logging, there was no difference in the value when the Python code passed it to the C code (the uniindex value logged in the Python code).

So now I knew: the problem lay in how the C code took the value from the Python code. At this point I started figuring out how that worked. The key line is this one:

if (!PyArg_ParseTuple(args, "I:get_char_index", &ccode)) {

That PyArg_ParseTuple function is what the C code is using to read in the value that mathtext.py calls uniindex and it calls ccode, the one that's somehow being messed up on ppc64. So let's read the docs!

This is one unusual example where the Python docs, which are usually awesome, are a bit difficult, because that's a very thin description which doesn't provide the references you usually get. But all you really need to do is read up - go back to the top of the page, and you get a much more comprehensive explanation. Reading carefully through the whole page, we can see pretty much what's going on in this call. It basically means that args is expected to be a structure representing a single Python object, a number, which we will store into the C variable ccode. The tricky bit is that second arg, "I:get_char_index". This is the 'format string' that the Python page goes into a lot of helpful detail about.

As it tells us, PyArg_ParseTuple "use[s] format strings which are used to tell the function about the expected arguments...A format string consists of zero or more “format units.” A format unit describes one Python object; it is usually a single character or a parenthesized sequence of format units. With a few exceptions, a format unit that is not a parenthesized sequence normally corresponds to a single address argument to these functions." Next we get a list of the 'format units', and I is one of those:

I (integer) [unsigned int]

Convert a Python integer to a C unsigned int, without overflow checking.

You might also notice that the list of format units include several for converting Python integers to other things, like i for 'signed int' and h for 'short int'. This will become significant soon!

The :get_char_index bit threw me for a minute, but it's explained further down:

"A few other characters have a meaning in a format string. These may not occur inside nested parentheses. They are: ... : The list of format units ends here; the string after the colon is used as the function name in error messages (the “associated value” of the exception that PyArg_ParseTuple() raises)." So in our case here, we have only a single 'format unit' - I - and get_char_index is just a name that'll be used in any error messages this call might produce.

So now we know what this call is doing. It's saying "when some Python code calls this function, take the args it was called with and parse them into C structures so we can do stuff with them. In this case, we expect there to be just a single arg, which will be a Python integer, and we want to convert it to a C unsigned integer, and store it in the C variable ccode."

(If you're playing along at home but you didn't get it earlier, you really should be able to get it now! Hint: read up just a few lines in the C code. If not, go refresh your memory about architectures...)

And once I understood that, I realized what the problem was. Let's read up just a few lines in the C code:

FT_ULong ccode;

Unlike Python, C and C++ are statically typed languages. That just means that all variables must be declared to be of a specific type, unlike Python variables, which you don't have to declare explicitly and which can change type any time you like. This is a variable declaration: it's simply saying "we want a variable called ccode, and it's of type FT_ULong".

If you know anything at all about C integer types, you should know what the problem is by now (you probably worked it out a few paragraphs back). But if you don't, now's a good time to learn!

There are several different types you can use for storing integers in C: short, int, long, and possibly long long (depends on your arch). This is basically all about efficiency: you can only put a small number in a short, but if you only need to store small numbers, it might be more efficient to use a short than a long. Theoretically, when you use a short the compiler will allocate less memory than when you use an int, which uses less memory again than a long, which uses less than a long long. Practically speaking some of them wind up being the same size on some platforms, but the basic idea's there.

All the types have signed and unsigned variants. The difference there is simple: signed numbers can be negative, unsigned ones can't. Say an int is big enough to let you store 101 different values: a signed int would let you store any number between -50 and +50, while an unsigned int would let you store any number between 0 and 100.
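You can poke at this from Python without writing any C. The sketch below uses the standard ctypes module to print how big each of these types is on whatever machine you run it on (the answers really do differ between platforms, so I'm not going to promise you any particular output), and then works out the real range for a given number of bits. Real integers aren't quite as symmetrical as my -50 to +50 example: the negative side gets one extra value. The int_range helper is just something I made up for illustration.

```python
import ctypes

# Sizes are whatever the C compiler for *this* platform uses.
for ctype in (ctypes.c_short, ctypes.c_int, ctypes.c_long, ctypes.c_longlong):
    print(ctype.__name__, ctypes.sizeof(ctype) * 8, "bits")


def int_range(bits, signed):
    """Smallest and largest value an integer of `bits` bits can hold."""
    if signed:
        return -(2 ** (bits - 1)), 2 ** (bits - 1) - 1
    return 0, 2 ** bits - 1


print(int_range(32, signed=True))   # (-2147483648, 2147483647)
print(int_range(32, signed=False))  # (0, 4294967295)
```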

Now look at that ccode declaration again. What is its type? FT_ULong. That ULong...sounds a lot like unsigned long, right?

Yes it does! Here, have a cookie. C code often declares its own aliases for standard C types like this; we can find Freetype's in its API documentation, which I found by the cunning technique of doing a web search for FT_ULong. That finds us this handy definition: "A typedef for unsigned long."

Aaaaaaand herein lies our bug! Whew, at last. As, hopefully, you can now see, this ccode variable is declared as an unsigned long, but we're telling PyArg_ParseTuple to convert the Python object such that we can store it as an unsigned int, not an unsigned long.
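The check I did in my head here is mechanical enough to write down. This is only a toy sketch, not a real linter: the table covers just a handful of format units (with their C types as listed in the Python C API docs), the only typedef it knows about is FT_ULong, and the function name is made up.

```python
# C types that a few PyArg_ParseTuple format units write into, as listed in
# the Python C API documentation. Deliberately incomplete.
FORMAT_UNIT_TYPES = {
    "h": "short int",
    "i": "int",
    "I": "unsigned int",
    "k": "unsigned long",
}

# Typedefs we need to see through; FT_ULong is the one from this story.
TYPEDEFS = {"FT_ULong": "unsigned long"}


def check_format_unit(unit, declared_type):
    """Return None if `unit` matches the variable's C type, else a message."""
    actual = TYPEDEFS.get(declared_type, declared_type)
    expected = FORMAT_UNIT_TYPES[unit]
    if expected == actual:
        return None
    return "format unit %r fills in type '%s', but the variable has type '%s'" % (
        unit, expected, actual)


print(check_format_unit("I", "FT_ULong"))  # reports the mismatch
print(check_format_unit("k", "FT_ULong"))  # None: the types agree
```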

But wait, you think. Why does this seem to work OK on most arches, and only fail on ppc64? Again, some of you will already know the answer, good for you, now go read something else. ;) For the rest of you, it's all about this concept called 'endianness', which you might have come across and completely failed to understand, like I did many times! But it's really pretty simple, at least if we skate over it just a bit.

Consider the number "forty-two". Here is how we write it with numerals: 42. Right? At least, that's how most humans do it, these days, unless you're a particularly hardy survivor of the fall of Rome, or something. This means we humans are 'big-endian'. If we were 'little-endian', we'd write it like this: 24. 'Big-endian' just means the most significant element comes 'first' in the representation; 'little-endian' means the most significant element comes last.
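In a computer the 'elements' being ordered are bytes rather than decimal digits, but the idea is the same. Here's a quick sketch you can run to see both orderings for the same number, using the code point of the minus sign from the warnings we saw earlier:

```python
import sys

value = 0x2212  # the code point of the minus sign from the warnings above

big = value.to_bytes(4, "big")        # most significant byte first
little = value.to_bytes(4, "little")  # least significant byte first

print(big.hex())      # 00002212
print(little.hex())   # 12220000
print(sys.byteorder)  # byte order of the machine running this
```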

All the arches Fedora supports except for ppc64 are little-endian. On little-endian arches, this error doesn't actually cause a problem: even though we used the wrong format unit, the value winds up being correct. On (64-bit) big-endian arches, however, it does cause a problem - when you tell PyArg_ParseTuple to convert to an unsigned int, but store the result into a variable that was declared as an unsigned long, you get a completely different value (it's multiplied by 2^32). The reasons for this involve getting into a more technical understanding of little-endian vs. big-endian (we actually have to get into the icky details of how values are really represented in memory), which I'm going to skip since this post is already long enough.
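If you'd like to see the effect without the icky details, you can imitate it in a few lines of Python with the standard struct module. This is only a simulation under some assumptions: I'm assuming a 4-byte unsigned int and an 8-byte unsigned long (as on 64-bit Linux), and I start with the 8-byte slot zeroed, whereas the real C variable was uninitialised, so its other four bytes could have held anything. The function name is made up.

```python
import struct


def store_uint_read_ulong(value, byte_order):
    """Imitate the bug: write `value` as a 4-byte unsigned int into the start
    of an 8-byte slot, then read the whole slot as an 8-byte unsigned integer.

    byte_order is "<" for little-endian or ">" for big-endian.
    Assumption: the slot starts out zeroed (the real variable did not).
    """
    slot = bytearray(8)
    struct.pack_into(byte_order + "I", slot, 0, value)
    (result,) = struct.unpack(byte_order + "Q", slot)
    return result


minus = 0x2212
print(hex(store_uint_read_ulong(minus, "<")))  # 0x2212
print(hex(store_uint_read_ulong(minus, ">")))  # 0x221200000000
```

On the little-endian side the four bytes land in the low half of the slot and the value survives; on the big-endian side they land in the high half, which is where the factor of 2^32 comes from.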

But you don't really need to understand it completely, certainly not to be able to spot problems like this. All you need to know is that there are little-endian and big-endian arches, and little-endian are far more prevalent these days, so it's not unusual for low-level code to have weird bugs on big-endian arches. If something works fine on most arches but not on one or two, check if the ones where it fails are big-endian. If so, then keep a careful eye out for this kind of integer type mismatch problem, because it's very, very likely to be the cause.

So now all that remained to do was to fix the problem. And here we go, with our one character patch:

    diff --git a/src/ft2font_wrapper.cpp b/src/ft2font_wrapper.cpp
    index a97de68..c77dd83 100644
    --- a/src/ft2font_wrapper.cpp
    +++ b/src/ft2font_wrapper.cpp
    @@ -971,7 +971,7 @@ static PyObject *PyFT2Font_get_char_index(PyFT2Font *self, PyObject *args, PyObj
         FT_UInt index;
         FT_ULong ccode;
    -    if (!PyArg_ParseTuple(args, "I:get_char_index", &ccode)) {
    +    if (!PyArg_ParseTuple(args, "k:get_char_index", &ccode)) {
             return NULL;
         }

There's something I just love about a one-character change that fixes several hundred test failures. :) As you can see, we simply change the I - the format unit for unsigned int - to k - the format unit for unsigned long. And with that, the bug is solved! I applied this change on both x86_64 and ppc64, re-built the code and re-ran the test suite, and observed that several hundred errors disappeared from the test suite on ppc64, while the x86_64 tests continued to pass.

So I was able to send that patch upstream, apply it to the Fedora package, and once the package build went through, I could finally build python-deap successfully, two days after I'd first tried it.

Bonus extra content: even though I'd fixed the python-deap problem, as I'm never able to leave well enough alone, it wound up bugging me that there were still several hundred other failures in the matplotlib test suite on ppc64. So I wound up looking into all the other failures, and finding several other similar issues, which got the failure count down to just two sets of problems that are too domain-specific for me to figure out, and actually also happen on aarch64 and ppc64le (they're not big-endian issues). So to both the people running matplotlib on ppc64...you're welcome ;)

Seriously, though, I suspect without these fixes, we might have had some odd cases where a noarch package's documentation would suddenly get messed up if the package happened to get built on a ppc64 builder.